Learning a Discriminative Model for the Perception of Realism in Composite Images

Jun-Yan Zhu, Philipp Krähenbühl, Eli Shechtman, Alyosha Efros

ICCV 2015

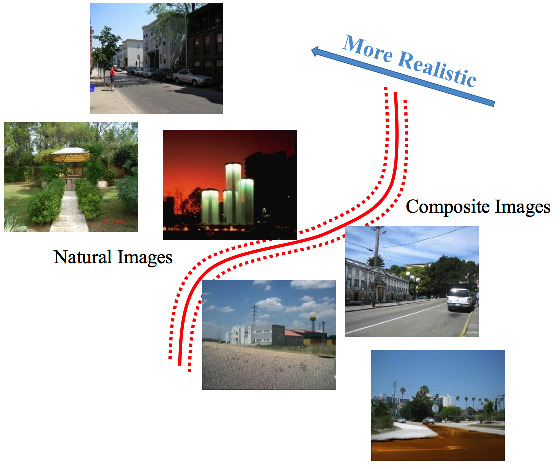

What makes an image appear realistic? In this work, we approach this question from a data-driven perspective, learning the perception of visual realism directly from large amounts of unlabeled data. In particular, we train a Convolutional Neural Network (CNN) model that distinguishes natural photographs from automatically generated composite images. The model learns to predict the visual realism of a scene in terms of color, lighting, and texture compatibility, without any human annotations of realism. Our model outperforms previous methods that rely on hand-crafted heuristics on the task of classifying realistic vs. unrealistic photos. Furthermore, we use our learned model to optimize the parameters of a compositing method so as to maximize the visual realism score predicted by our CNN. We demonstrate its advantage over existing methods via a human perception study.
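The idea of choosing compositing parameters to maximize a realism score can be illustrated with a minimal sketch. Everything here is an assumption made for illustration: `toy_realism_score` is a hypothetical stand-in that only compares mean colors and is not the learned CNN, the compositing model is a simple per-channel gain and bias on the foreground, and the search is a plain grid search rather than whatever optimizer the paper uses.

```python
import numpy as np

def composite(fg, bg, mask, gain, bias):
    """Paste a color-adjusted foreground onto a background.

    fg, bg: float arrays of shape (H, W, 3) with values in [0, 1].
    mask:   float array of shape (H, W), 1 inside the pasted object.
    gain, bias: per-channel adjustments of shape (3,) (hypothetical parameters).
    """
    adjusted = np.clip(fg * gain + bias, 0.0, 1.0)
    m = mask[..., None]
    return m * adjusted + (1.0 - m) * bg

def toy_realism_score(image, mask):
    """Hypothetical stand-in for a learned realism score.

    Returns a higher value when the mean color inside the mask is closer
    to the mean color outside it. A trained CNN would replace this.
    """
    m = mask[..., None]
    fg_mean = (image * m).sum(axis=(0, 1)) / m.sum()
    bg_mean = (image * (1.0 - m)).sum(axis=(0, 1)) / (1.0 - m).sum()
    return -float(np.sum((fg_mean - bg_mean) ** 2))

def optimize_gain(fg, bg, mask, candidates):
    """Grid search over a shared gain, keeping the best-scoring composite."""
    best_gain, best_score = None, -np.inf
    for g in candidates:
        gain = np.full(3, g)
        image = composite(fg, bg, mask, gain, np.zeros(3))
        score = toy_realism_score(image, mask)
        if score > best_score:
            best_gain, best_score = gain, score
    return best_gain, best_score

# Usage: a bright foreground patch pasted onto a darker background.
fg = np.full((8, 8, 3), 0.8)
bg = np.full((8, 8, 3), 0.4)
mask = np.zeros((8, 8))
mask[2:6, 2:6] = 1.0
gain, score = optimize_gain(fg, bg, mask, np.linspace(0.25, 1.5, 6))
```

With a differentiable score such as a CNN, the grid search could be replaced by gradient-based optimization over the same parameters; the structure of the loop (adjust, composite, score, keep the best) stays the same.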